Inside the Mind of Alan Wake 2: A Series

Inside the Mind of Alan Wake 2 is a deep dive into the creative and technical processes that shaped the game’s audio experience. This blog series explores the meticulous decisions, challenges, and innovations behind Alan Wake 2’s soundscape. From crafting dynamic dialogue and immersive Echoes to the vital role of audio QA and the game’s unique profiling system, step inside the minds of the audio team who brought this world to life.

In this first installment, Senior Dialogue Designers Arthur Tisseront & Taneli Suoranta take us into the depths of Alan Wake 2’s dialogue implementation, breaking down the interplay of spoken and narrated dialogue and how audio design helped maintain clarity in a world where reality is constantly shifting.

Introduction: Distinguishing Dialogue

Alan Wake 2 presented quite a few unique challenges when it came to dialogue implementation. It is a game about a writer and stories coming to life, narrated by two different playable characters (Saga Anderson and Alan Wake), with a constant interplay of narrated and spoken dialogue, none of which is necessarily constrained by the laws of baseline reality. That gave us a lot of freedom to play around with implementation, so that things felt right in the context of the story while staying interesting and informative for the player.

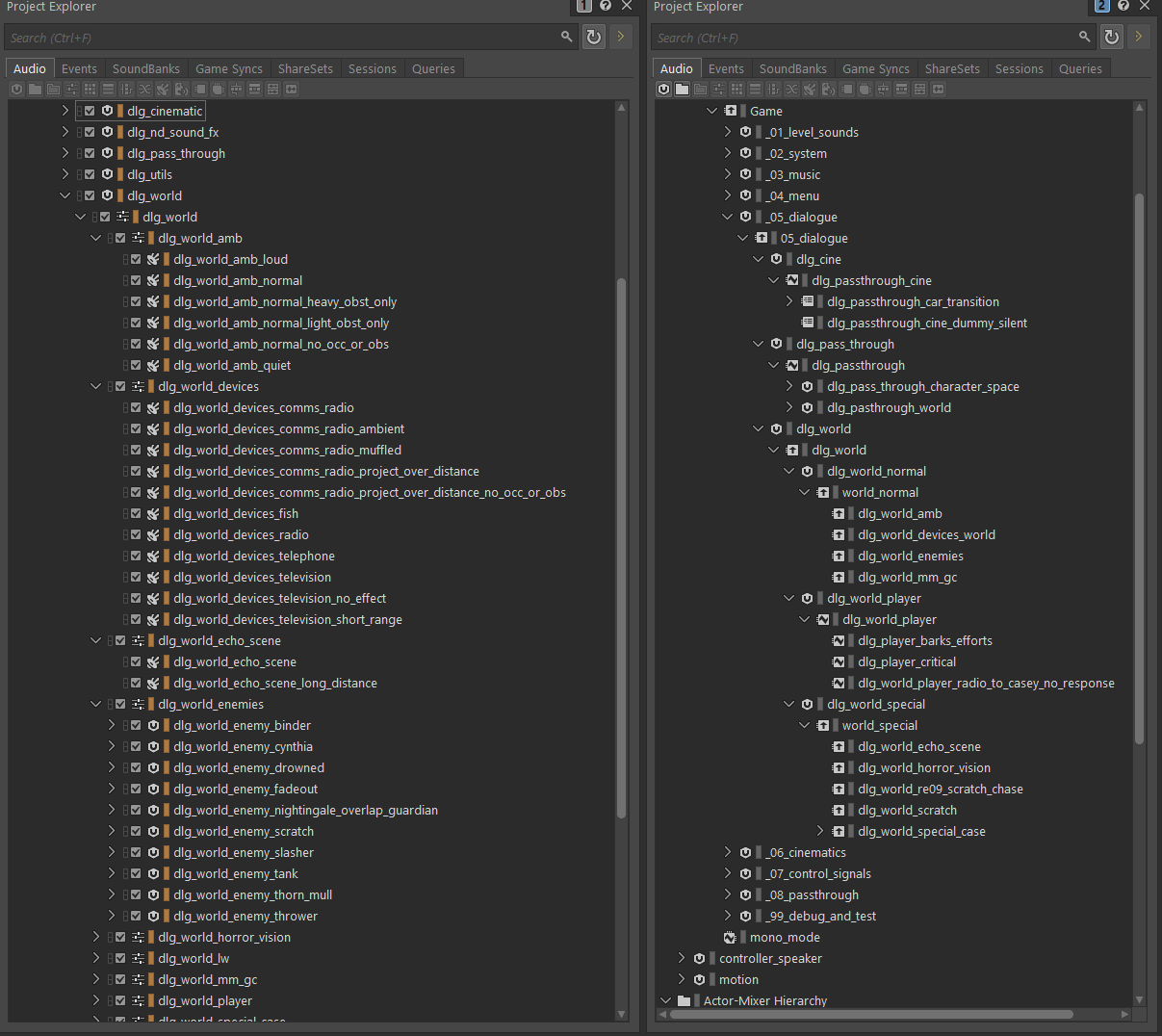

Wwise Structure

The way dialogue is integrated with Remedy’s Northlight engine allows us to use external source files – no in-game dialogue files live in Wwise itself. Wwise is basically a way for us to have very granular control over what categories of spatialization and effect buses each line of dialogue gets, while still being able to manage the files elsewhere as we see fit. We use internal tools to assign lines of dialogue to Wwise sound events (which we call dialogue types), and those define how those lines should be spatialized at runtime. This allowed us to have most of the dialogue follow the same blanket rules for attenuation, obstruction, and other game conditions, while still being able to account for edge cases by adding a new sound event with the settings we needed (for example, to bypass occlusion on a specific set of lines). This also led to a simpler overall bus structure.
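As a rough illustration of the idea of dialogue types, here is a minimal sketch of lines being assigned to a small set of shared settings, with one extra type covering an edge case. It is not Remedy's internal tooling: the event names, line IDs, and fields are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DialogueType:
    """Hypothetical stand-in for one Wwise sound event used as a dialogue type."""
    event_name: str
    use_occlusion: bool = True


# Illustrative dialogue types; names and settings are assumptions.
DIALOGUE_TYPES = {
    "default": DialogueType("Play_VO_Default"),
    "no_occlusion": DialogueType("Play_VO_NoOcclusion", use_occlusion=False),
}

# Only the edge-case lines need an explicit assignment (made-up line IDs).
LINE_ASSIGNMENTS = {
    "cynthia_taunt_03": "no_occlusion",
}


def resolve_line(line_id: str) -> DialogueType:
    """Return the dialogue type for a line, falling back to the blanket rules."""
    return DIALOGUE_TYPES[LINE_ASSIGNMENTS.get(line_id, "default")]
```

Most lines fall through to the default type, so adding a new edge case means adding one entry rather than touching every line.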

Using external sources in this way allowed us to keep the Wwise project lean and quick to load – we had many thousands of lines in our external source folders, around 27 thousand in total per language, that did not need to be loaded when Wwise was opened.

The sole exception to this was cinematics – all cinematics in the game are processed offline and played back via unique Wwise events, one per cinematic, with dialogue in 7.1 format routed to a 7.1.4 output bus. This was done to always ensure accurate sync to picture and the highest possible mix quality during cinematic moments, as well as allowing us to perform the fun offline processing/SFX interplay that you can hear in those moments.

Because of this use of external sources, we could not mix individual dialogue assets within Wwise itself. During production, it was extremely important that assets were pre-mixed to our specs before going through Wwise – and, as we had limited time to do the final mix of the game, having pre-mixed assets to spec after recording sessions was critical. In this sense, the mix was done iteratively during the implementation process – there was no real need for a final dialogue mixing pass in Wwise, outside of blanket adjustments to dialogue types.

What is Narration, Really?

To demonstrate how we used these dialogue types, let’s start with the split between narration and spoken dialogue. To us, these meant:

- Spoken Dialogue: A character is speaking out loud, moving their mouth physically.

- Narration: A character is speaking their thoughts inside their own head, or a character is narrating the story as it happens.

These were not our only two categories of dialogue, but they were the most pivotal to the game making sense.

We wanted to dive as deeply as we could into this distinction so that it was always 100% clear to the player who was talking and whether they were talking out loud. Not only that, but we could sometimes have multiple “narrators” in a single scene – for example, Alan narrating from the Dark Place while Saga narrates her inner thoughts in the same part of the game, in addition to any outspoken dialogue, as in this gameplay snippet:

In order, we hear:

- Saga speaking out loud

- Saga narrating her thoughts

- Alan narrating the story from the Dark Place

- Saga narrating her thoughts again

This clip should give some indication as to why we needed these distinctions.

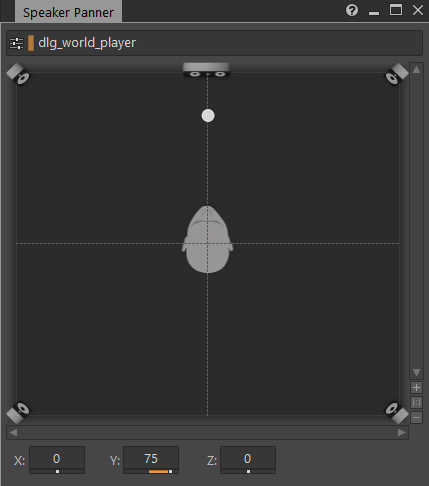

Spoken dialogue always plays spatialized to the player character’s head, and has game-defined reverb sends enabled. This already gets us a pretty good distinction between the two types. We also pushed spoken dialogue forward in Wwise’s steering panner to enhance this effect further.

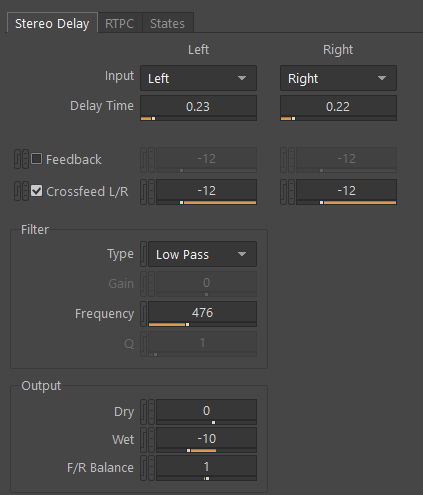

In addition to this, spoken and narrated dialogue were mastered differently before entering Wwise. Matthew Porretta, the voice of Alan, has a wonderful-feeling resonant node in his voice at around 110 Hz. This was emphasized for his narrated dialogue via multiband compression and some careful EQ and was turned down for his spoken dialogue – we did similar things for Saga and her two types of dialogue. Saga and Alan’s narration also has some online processing – a simple Wwise stereo delay at a very low volume.

All these little things put together helped make it clear which dialogue was being spoken to the world and which was narrated – but we didn’t stop there.

Breathing

In addition to the dialogue itself, we chose to create a breathing system for each playable character to help connect them to the world even more. Saga and Alan both breathe constantly throughout the game, as a person would, with pace and tone of breaths appropriate to the situation they’re in. We were able to leverage Wwise’s auto-ducking and game states to make narration and spoken dialogue even more distinct: spoken dialogue kills the character breathing loop for its duration, then restarts the breathing after a short delay. Narrated dialogue does not. It’s a subtle distinction, but one that helps the player intuit whether the character is speaking aloud or not.

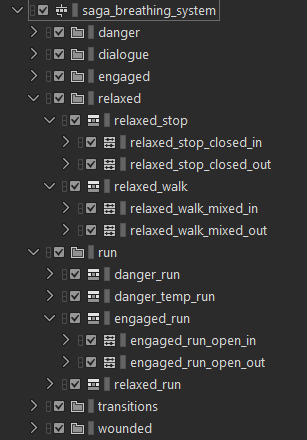

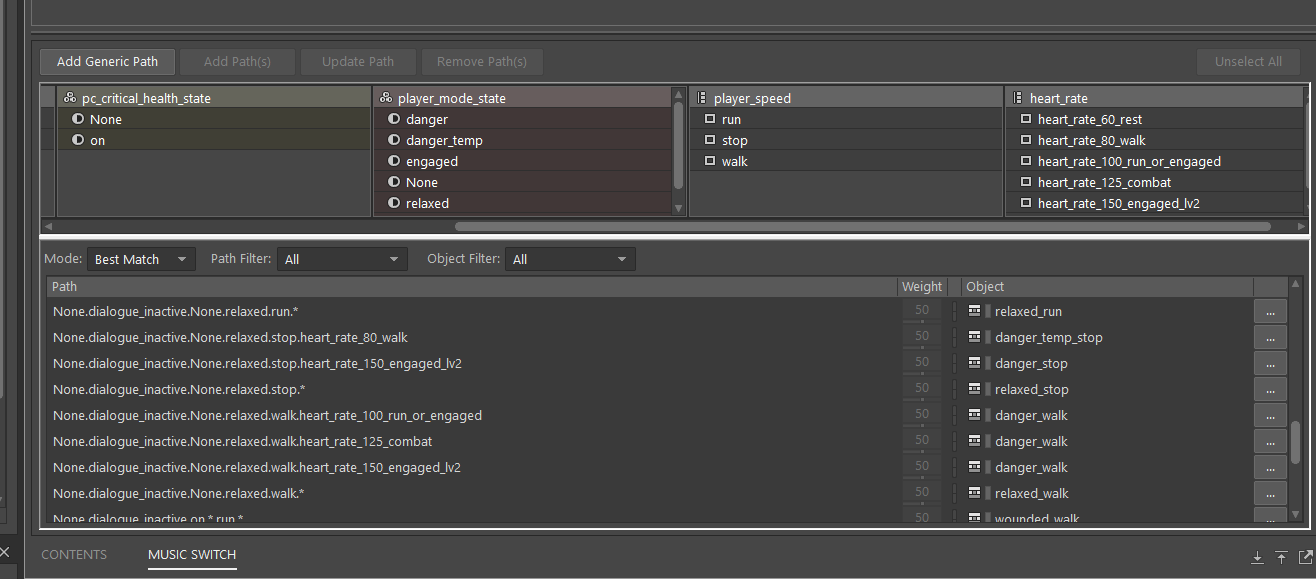
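The breathing interruption rule can be modelled in a few lines. The sketch below is a plain Python approximation of the behaviour described above, not the Wwise auto-ducking and game state setup itself; the class name and the 0.5 second restart delay are assumptions.

```python
class BreathingLoop:
    """Toy model: spoken dialogue stops breathing, narration leaves it alone."""

    def __init__(self, restart_delay: float = 0.5):
        self.restart_delay = restart_delay  # assumed value, in seconds
        self.playing = True
        self._spoken_active = 0             # number of spoken lines in flight
        self._restart_timer = None          # seconds left before breathing resumes

    def on_dialogue_start(self, is_spoken: bool) -> None:
        if is_spoken:
            self._spoken_active += 1
            self.playing = False
            self._restart_timer = None      # cancel any pending restart

    def on_dialogue_end(self, is_spoken: bool) -> None:
        if is_spoken and self._spoken_active > 0:
            self._spoken_active -= 1
            if self._spoken_active == 0:
                self._restart_timer = self.restart_delay

    def update(self, dt: float) -> None:
        """Advance time; resume breathing once the restart delay has elapsed."""
        if self._restart_timer is not None:
            self._restart_timer -= dt
            if self._restart_timer <= 0.0:
                self.playing = True
                self._restart_timer = None
```

Counting active spoken lines rather than using a single flag keeps overlapping lines from restarting the breathing early.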

The breathing system itself lives in the music hierarchy, with each breath as its own individual music clip. It has three primary states – relaxed, danger, and engaged, with stop (standing still), walk, and run variants of each, in addition to a “wounded” state when the player is at low health.

Every state can transition seamlessly into every other state using Wwise’s interactive music transition system, and the player characters will “cool down” after running for a bit or transitioning from a high to low intensity state, emulating how people need to catch their breath after physical exertion.

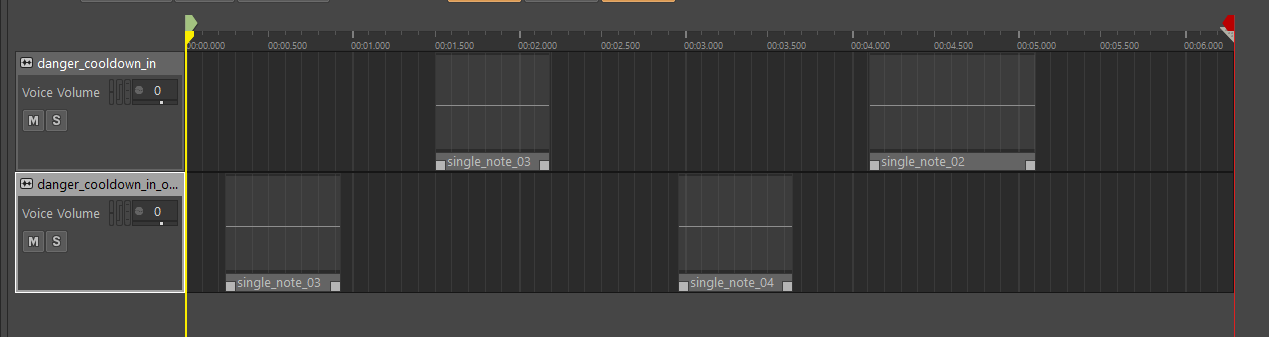

A cooldown is a select set of breaths from each respective type that sounds like the character is slowing their exertion down, and is manually timed and sequenced like so:

Alan gets winded much quicker and breathes much rougher than Saga, since he is, as you might expect, quite out of shape compared to a trained FBI agent. All of this is connected to the player character’s heart rate value, which is raised by actions such as running and being attacked and lowered in calm areas – a higher heart rate causes breaths to play back faster. Each player character had a little over 1,000 individual breath assets to support this system, with a range of ins, outs, and open and closed mouth assets for variety.

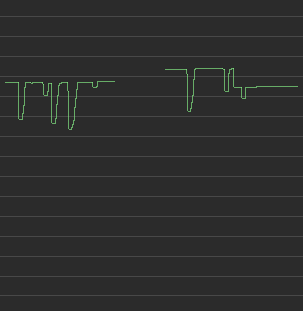
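To make the heart rate relationship concrete, here is a minimal sketch of a heart rate value that rises with exertion and falls in calm areas, mapped to the gap between breaths. Every number in it (resting and maximum rates, per-second changes, breath intervals) is an assumed tuning value for illustration, not the game's data.

```python
REST_BPM = 70.0   # assumed resting heart rate
MAX_BPM = 170.0   # assumed ceiling


def step_heart_rate(bpm, dt, running=False, attacked=False, calm_area=False):
    """Advance the heart rate by dt seconds using made-up rates of change."""
    rate = 0.0
    if running:
        rate += 8.0    # beats per minute gained per second of running
    if attacked:
        rate += 20.0
    if calm_area:
        rate -= 6.0
    return min(MAX_BPM, max(REST_BPM, bpm + rate * dt))


def breath_interval(bpm, slow=4.0, fast=1.2):
    """Seconds between breaths: a higher heart rate gives a shorter interval."""
    t = (bpm - REST_BPM) / (MAX_BPM - REST_BPM)
    t = min(1.0, max(0.0, t))
    return slow + t * (fast - slow)
```

Giving each character their own rates would be one way to express Alan getting winded sooner than Saga.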

We also added a sidechain from the player footsteps to this breathing loop – each step quickly ducks the player’s breathing, with jogging and running causing the heaviest effect. This aimed to emulate what happens when a person shifts more air out of their lungs during heavy footfalls. While it’s technically the opposite effect (in life, this manifests as more air coming out during a footfall), the rhythm that it imparts to the breathing is similar, and the effect of increasing the volume with a footfall didn’t feel as good to us when tested. The voice volume graph of a breath looks like this when the player is running:

In the mix, breaths are often barely audible – we wanted them to be felt more than they were heard, outside of tense moments in quiet areas. Below is a video of the game with breaths significantly boosted in volume so you can hear how they play back and how some of these systemic choices manifest in-game.

We feel that this attention to how we connected the player characters to the worlds they inhabit helped establish a stronger connection between them and the player, making the ways the story twists and turns around them affect the player themselves even more.

We wanted to make sure that enemies were adding to these twists and turns in their own way, too.

Dialogue as Ambience – Fadeouts and the Dark Place

In Alan’s section of the game, the player traverses the Dark Place – a surreal nightmare dimension created from Alan’s own stories. As such, he’s haunted by echoes of himself – shadows in the corners of his vision. These “Fadeouts” speak his words back to him constantly at a whisper level, until they become aggressive – after which, they start yelling.

One of the biggest challenges while creating the atmosphere of the Dark Place was implementing the whispers in a way that didn’t become annoying over time. We sometimes had dozens of Fadeouts in a single area, so avoiding a deluge of constant whispering was important, as that would kill the tension completely. We also wanted to make sure that the player was never quite sure where the shadows were until they were right on top of them, so the whispers couldn’t be too loud at any given time. Beyond getting the pacing of the whispers right in the system we used to drive them, the difficulty was that Fadeouts could appear anywhere in the Dark Place, outdoors or indoors, and in any number. We could not guarantee that a single audible attenuation range would work in every situation.

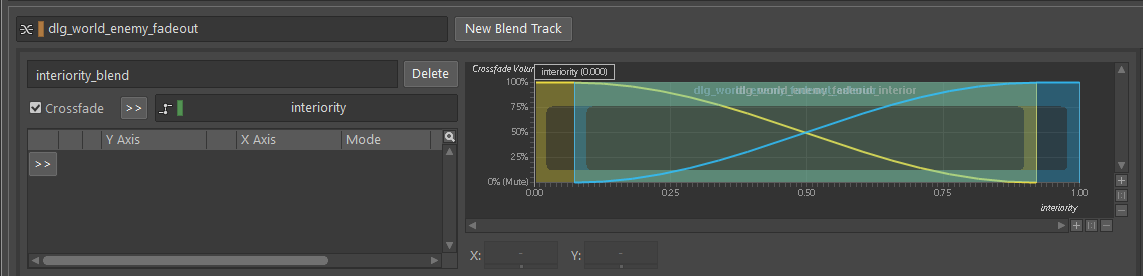

Our solution for this was to have these whispers exist in blend containers linked to whether the player was currently indoors (‘interiority’). The outdoor attenuation reaches about 10 m farther than the indoor one. This allowed us to keep the systemic pacing we’d tuned so carefully throughout the whole Dark Place with no additional complexity on the scripting side of things.
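The effect of blending two attenuations by interiority can be sketched as below. This assumes a linear falloff and a 15 m indoor range purely for illustration; only the roughly 10 m difference between the two comes from the text, and the function names are hypothetical.

```python
def _linear_falloff(distance: float, max_distance: float) -> float:
    """Gain from 1.0 at the emitter down to 0.0 at max_distance (assumed curve)."""
    if distance >= max_distance:
        return 0.0
    return 1.0 - max(0.0, distance) / max_distance


def whisper_gain(distance: float, interiority: float,
                 indoor_max: float = 15.0, outdoor_extra: float = 10.0) -> float:
    """Crossfade between indoor and outdoor attenuation.

    interiority is 0.0 fully outdoors and 1.0 fully indoors.
    """
    interiority = min(1.0, max(0.0, interiority))
    indoor = _linear_falloff(distance, indoor_max)
    outdoor = _linear_falloff(distance, indoor_max + outdoor_extra)
    return (1.0 - interiority) * outdoor + interiority * indoor
```

Because the blend is driven by a single value, the code that decides when a whisper plays does not need to know where the Fadeout is standing.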

Monsters and Bosses

The most complicated implementation of enemy dialogue involved our boss fights. In most cases, bosses were able to teleport, go fully off-screen, or otherwise leave the reality the player exists in – but still needed to be present and menacing in the battle the entire time. Let’s take Cynthia as an example:

During this fight’s first phase, she spends most of her time underwater, totally invisible to the player. She could be anywhere – and we needed to communicate that she was still around, waiting for the player to make a mistake so she could catch them. On the engine’s side of this fight, she was completely hidden and stationary, so we could not make use of her in-game location to attach this dialogue to. In addition to this, Tor, the person Cynthia has stolen away to her underwater nightmare realm, is also shouting at the player throughout the fight. There’s a lot going on.

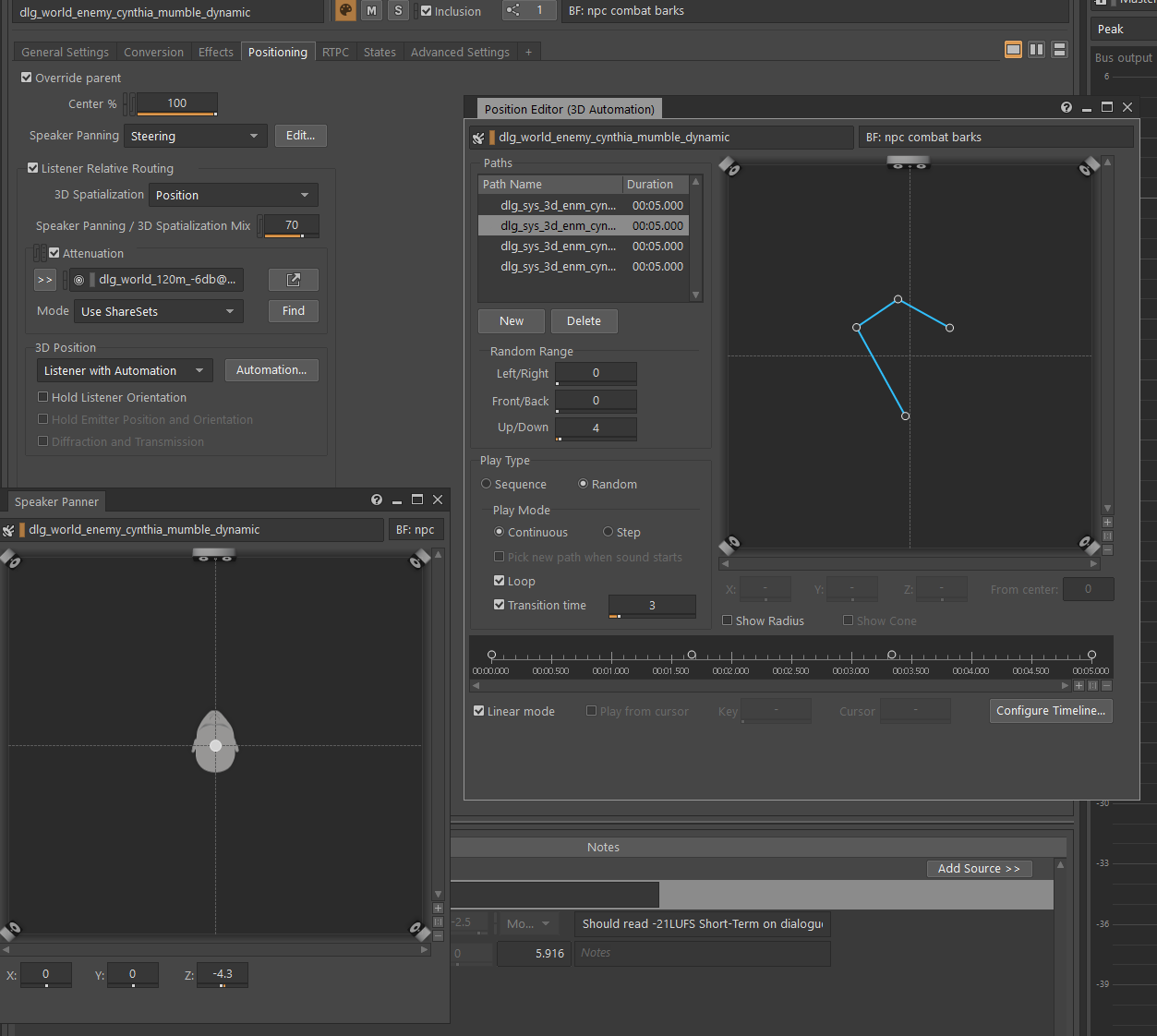

To convey all of this, we used a combination of Wwise’s “Listener with Automation” spatialization option with some fun custom convolution Impulse Responses (the same ones that are used as standard aux sends in the Dark Place) to make Cynthia sound like she was both underwater and still speaking to the player. While the player is not being actively chased, Cynthia’s dialogue loops, floating and rotating around the listener randomly, to give the feeling of surrounding the player. We used Wwise’s Steering spatialization type to force her position to always seem slightly under the player.
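For readers who want a feel for the movement, here is a small sketch of a voice drifting around the listener while staying slightly below them. In the game this was authored with Wwise's positioning options rather than code like this; the radius, drop, drift speed, and the choice of y as the up axis are all assumptions.

```python
import math
import random


def drift_angle(angle: float, dt: float, rng: random.Random,
                max_speed: float = 1.5) -> float:
    """Randomly nudge the orbit angle; max_speed is in radians per second."""
    return (angle + rng.uniform(-max_speed, max_speed) * dt) % (2.0 * math.pi)


def orbit_position(listener, angle: float, radius: float = 3.0, drop: float = 1.0):
    """Place the voice on a circle around the listener, 'drop' metres below (y is up)."""
    x, y, z = listener
    return (x + radius * math.cos(angle), y - drop, z + radius * math.sin(angle))
```

Calling `drift_angle` once per frame and feeding the result into `orbit_position` gives a voice that wanders around the player without ever settling on a direction.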

When Cynthia starts chasing the player, we revert to normal spatialization tied to her physical presence in the world, as at that point, the player needs to know exactly where to run away from.

Tor is static, centered and fixed below the arena, but with the same reverb as Cynthia so that the player can intuit that they are in the same general dimension.

We used similar tricks whenever other bosses go off-screen, as they often do – in Nightingale’s fight, the first boss fight in the game, he can teleport off-screen after a special attack and surprise the player by appearing from outside their vision to attack them again. The same kind of automation is applied to his dialogue while he’s off-screen to indicate that this is happening.

Audio-Driven Visuals

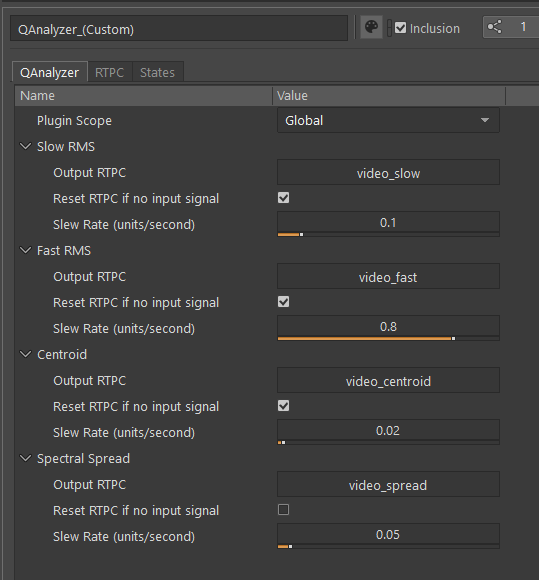

Dialogue was also used to drive visuals for certain core parts of the game: the Echo Scenes (ghostly images from other realities scattered around the Dark Place) and certain types of manuscript pages that can be found throughout the game. This dialogue was routed to an FX send with a custom Wwise plugin, QAnalyzer, that allowed us to send the bus’s output RMS data to the engine at runtime:

These values were used by the VFX team to create the flickering, audio-responsive visuals that the player encounters in the game – and were yet another way to help us differentiate who was narrating in the current scene.
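As a reference for what an RMS value is, here is a minimal sketch of computing it for one block of samples and mapping it to a 0 to 1 intensity that a visual effect could read. It does not describe how QAnalyzer works internally; the decibel thresholds and function names are assumptions.

```python
import math


def block_rms(samples) -> float:
    """Root mean square of one block of samples in the range -1.0 to 1.0."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def rms_to_db(rms: float, floor_db: float = -96.0) -> float:
    """Convert linear RMS to decibels, clamped to a floor for silence."""
    if rms <= 0.0:
        return floor_db
    return max(floor_db, 20.0 * math.log10(rms))


def flicker_intensity(rms_db: float, low_db: float = -48.0,
                      high_db: float = -6.0) -> float:
    """Map a level in dB to 0..1 between two assumed thresholds."""
    t = (rms_db - low_db) / (high_db - low_db)
    return min(1.0, max(0.0, t))
```

Working in decibels before normalizing keeps quiet whispers from disappearing visually next to shouted lines.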

Echo Scene dialogue lived on its own dialogue type, spatialized to where the echo physically exists in the world, but with additional reverb to indicate that what you’re hearing isn’t from your current reality.

Attenuation, Occlusion, and Edge Cases

All this effort to spatialize things in interesting and distinct ways would be for nothing if we didn’t have appropriately detailed occlusion, obstruction, and propagation calculations. Without this, spoken dialogue would almost never sound naturally placed in the world, especially when the player moves around while dialogue is playing.

Obstruction and Occlusion calculations were a custom solution developed by our audio programmers, Iiro Rossell and Samuel Andresen. A series of acoustic probes, points in the world that the game can use to attempt to connect emitters with the listener via line trace, are placed in the world randomly at runtime.

These, in addition to manually placed probes in sensitive areas like doors and dense indoor geometry, are used to calculate obstruction and occlusion data based on a set of criteria:

- How far away the nearest probe is from the listener

- How many probes a sound would have to pass through from the emitter to reach the player

- Whether the probe’s path is interrupted by solid geometry, and if that material is acoustically transparent

If a sound is being occluded, this system will also physically move its emitter location to the nearest probe within direct line of sight to the player – emulating how sound travels around, not through, solid objects.

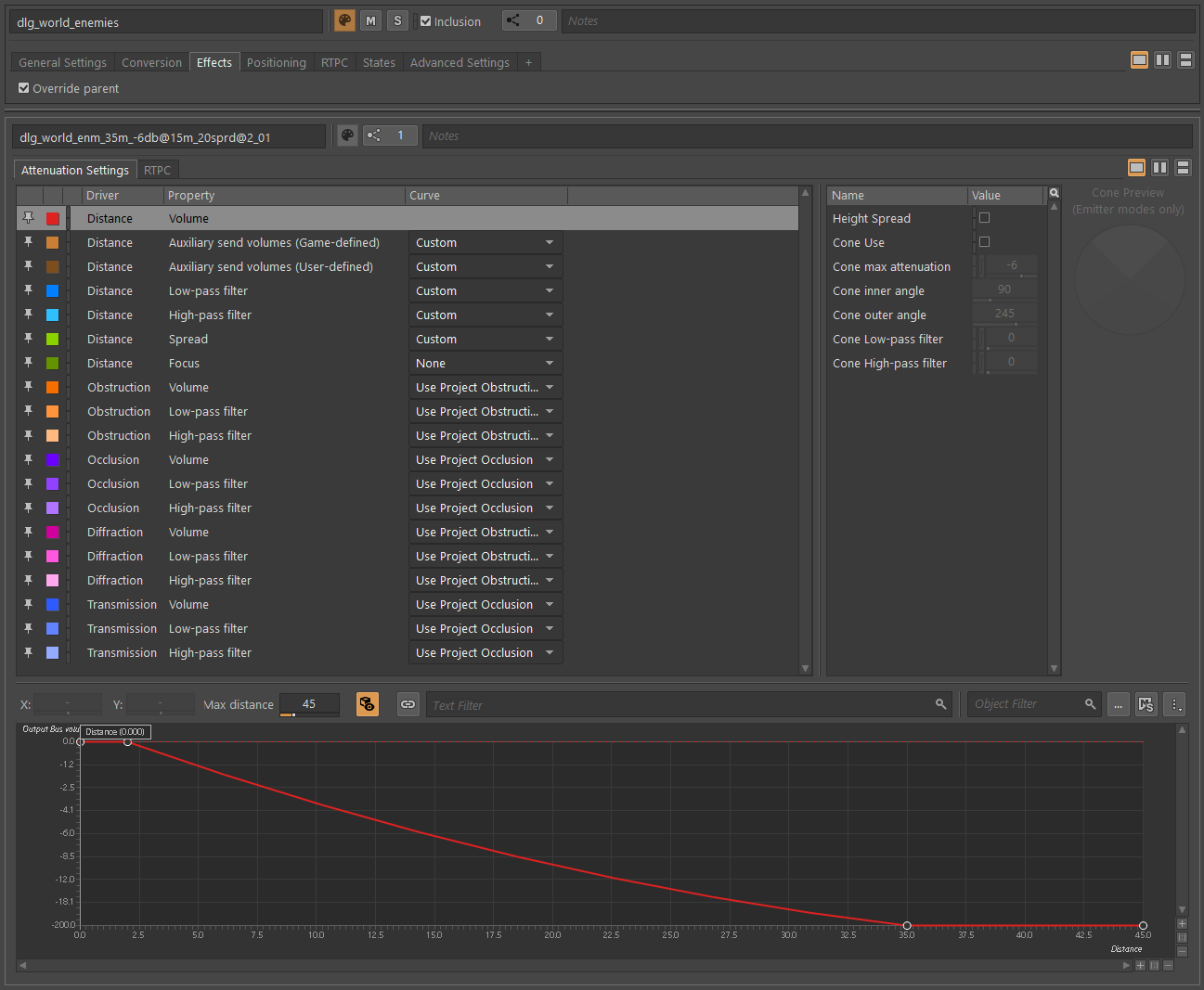
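The emitter relocation step can be sketched in 2D as follows. This is a simplified illustration, not the system built by Remedy's audio programmers: walls are plain line segments, "nearest" is taken to mean nearest to the original emitter, and all names are hypothetical.

```python
import math


def _orient(a, b, c) -> float:
    """Signed area test: which side of line a->b the point c lies on."""
    return (b[0] - a[0]) * (c[1] - a[1]) - (b[1] - a[1]) * (c[0] - a[0])


def segments_cross(p1, p2, q1, q2) -> bool:
    """True if segment p1-p2 properly crosses q1-q2 (touching does not count)."""
    d1, d2 = _orient(q1, q2, p1), _orient(q1, q2, p2)
    d3, d4 = _orient(p1, p2, q1), _orient(p1, p2, q2)
    return d1 * d2 < 0 and d3 * d4 < 0


def has_line_of_sight(a, b, walls) -> bool:
    """A line trace from a to b that fails if any wall segment blocks it."""
    return not any(segments_cross(a, b, w0, w1) for w0, w1 in walls)


def virtual_emitter(emitter, listener, probes, walls):
    """If the emitter is occluded, move it to the closest probe the listener can see."""
    if has_line_of_sight(emitter, listener, walls):
        return emitter
    visible = [p for p in probes if has_line_of_sight(p, listener, walls)]
    if not visible:
        return emitter  # nothing better available; keep the original position
    return min(visible, key=lambda p: math.dist(p, emitter))
```

With a wall between the two and a probe at the end of that wall, the sound appears to come from the wall's edge, which is where it would bend around the obstacle.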

Using this data, obstruction and occlusion curves were defined on the dialogue type level. We had a standard set of attenuations for different audible distances used in most places in the game, with some overrides for special cases like when dialogue needed to have no occlusion/obstruction at all, or needed specific online processing.

We chose not to use cone attenuation and had very little spread for dialogue, as we felt that it made intelligibility and positioning feel inconsistent when characters moved their heads around.

A Word on Performance

Much of this article talks about fun implementation tricks and the technical details of what we did to enhance the feel of dialogue in the game – but before we close off the article, we wanted to emphasize that none of this would have mattered without the incredible performances from Matthew Porretta as Alan Wake, Melanie Liburd as Saga Anderson, the actors behind our other major and boss characters, and our 21 wonderful enemy NPC actors who performed as the Taken. They all put their own personal twists on their characters, and without that, the dialogue would have none of the charm it does, no matter how much care and fun spatialization we applied. As the Taken’s lines came directly out of Wake’s manuscript pages, Sam Lake and Clay Murphy’s writing is also a huge part of the foundation that the implementation builds upon. No amount of technical polish can substitute for a great performance of great lines – it just lets those performances shine even more.

Conclusion

In a game like Alan Wake 2 that's full of wild, reality-breaking sequences and unique dialogue requirements to support them, the flexibility of Wwise’s systems pretty much let us do whatever we needed to, whenever we needed to. A lot of the implementation is not incredibly complex on its own, but needed to be tuned and thought through very carefully in the context of every other part of the game to not become overbearing or feel weird to the player in context. This level of hand-crafted implementation would not have been available to us without the functionality that Wwise offers.
